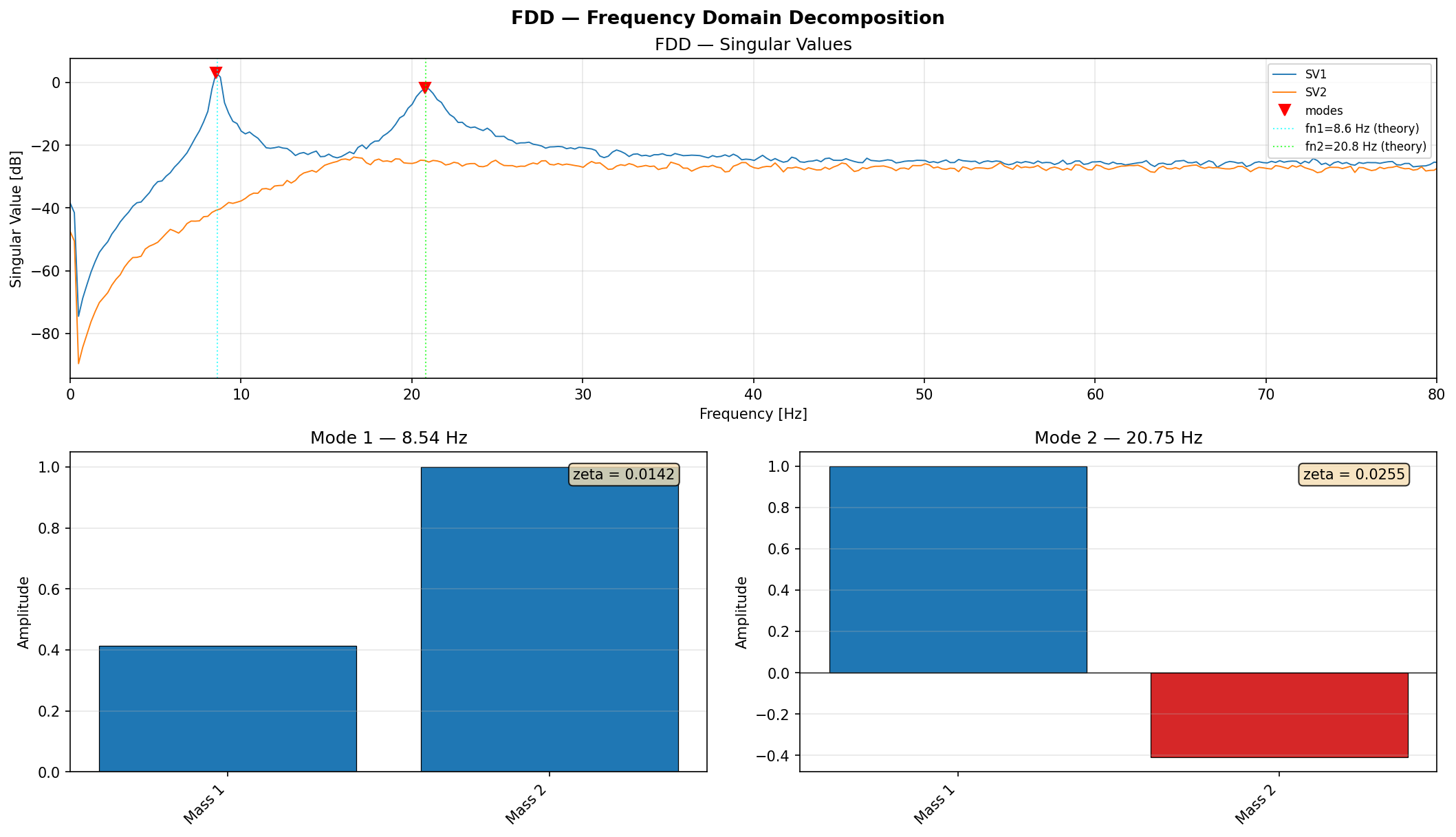

FDD — Frequency Domain Decomposition

Operational Modal Analysis via Frequency Domain Decomposition. Identifies natural frequencies, mode shapes, and damping ratios from output-only (ambient vibration) data.

dspkit.fdd.fdd_svd(data, fs, window='hann', nperseg=None, noverlap=None, detrend='constant')

Compute the SVD of the PSD matrix at each frequency line.

This is the core FDD computation. The first singular value curve (`S[:, 0]`) is the primary tool for identifying natural frequencies.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| `data` | `array_like, shape (n_channels, N)` | Multi-channel time series. Each row is one sensor. | required |
| `fs` | `float` | Sampling frequency [Hz]. | required |
| `window` | `str` | Window function for Welch estimation. | `'hann'` |
| `nperseg` | `int or None` | Welch segment length. Defaults to … | `None` |
| `noverlap` | `int or None` | Overlap between Welch segments. | `None` |
| `detrend` | `str or False` | Per-segment detrending. | `'constant'` |

Returns:

| Name | Type | Description |
|---|---|---|
| `freqs` | `ndarray, shape (M,)` | Frequency vector [Hz]. |
| `S` | `ndarray, shape (M, n_channels)` | Singular values at each frequency. |
| `U` | `ndarray, shape (M, n_channels, n_channels), complex` | Left singular vectors. |
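The computation described above can be sketched as follows. This is a minimal illustration built on `scipy.signal.csd` and `numpy.linalg.svd`, not the `dspkit` source; the name `fdd_svd_sketch` and the fixed `nperseg=256` are assumptions made for the example.

```python
import numpy as np
from scipy.signal import csd


def fdd_svd_sketch(data, fs, nperseg=256):
    """Illustrative FDD core: SVD of the cross-PSD matrix at each frequency line.

    Hypothetical helper for this example only; not the dspkit implementation.
    """
    data = np.atleast_2d(np.asarray(data, dtype=float))
    n = data.shape[0]
    G = None
    # Welch cross-spectral density for every channel pair (i, j).
    for i in range(n):
        for j in range(n):
            freqs, Pij = csd(data[i], data[j], fs=fs, window="hann",
                             nperseg=nperseg, detrend="constant")
            if G is None:
                G = np.empty((freqs.size, n, n), dtype=complex)
            G[:, i, j] = Pij
    # Batched SVD over the leading (frequency) axis.
    # S comes back sorted in descending order at each frequency line.
    U, S, _ = np.linalg.svd(G)
    return freqs, S, U
```

With a two-channel record, `S[:, 0]` from this sketch peaks near the dominant resonance, and `U[k, :, 0]` at the peak line `k` approximates the mode shape up to a complex scale factor.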

Source code in dspkit/fdd.py

dspkit.fdd.fdd_peak_picking(freqs, S, prominence=None, distance_hz=None, max_peaks=None, freq_range=None)

Pick natural frequency peaks from the first singular value curve.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| `freqs` | `ndarray, shape (M,)` | Frequency vector [Hz] (from `fdd_svd`). | required |
| `S` | `ndarray, shape (M, n_channels)` | Singular values (from `fdd_svd`). | required |
| `prominence` | `float or None` | Minimum peak prominence in dB. Peaks with less prominence are discarded. | `None` |
| `distance_hz` | `float or None` | Minimum distance between peaks [Hz]. | `None` |
| `max_peaks` | `int or None` | Return at most this many peaks (most prominent first). | `None` |
| `freq_range` | `(float, float) or None` | Restrict peak search to this frequency range [Hz]. | `None` |

Returns:

| Name | Type | Description |
|---|---|---|
| `peak_freqs` | `ndarray` | Natural frequencies [Hz]. |
| `peak_indices` | `ndarray of int` | Indices into `freqs`. |
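Peak picking on the first singular value curve can be sketched with `scipy.signal.find_peaks`. This is an illustration under stated assumptions rather than the library's code: the helper name `pick_peaks_sketch` is hypothetical, the curve is converted to dB with `10 * log10` (treating the singular values as power-like), the frequency range is applied after peak detection, and the selected peaks are returned in ascending frequency order.

```python
import numpy as np
from scipy.signal import find_peaks


def pick_peaks_sketch(freqs, S, prominence_db=None, distance_hz=None,
                      max_peaks=None, freq_range=None):
    """Illustrative peak picking on S[:, 0]; not the dspkit implementation."""
    freqs = np.asarray(freqs, dtype=float)
    s1 = np.asarray(S, dtype=float)[:, 0]
    # First singular value in dB; clip to avoid log10(0).
    s1_db = 10.0 * np.log10(np.maximum(s1, np.finfo(float).tiny))
    # Convert the minimum spacing from Hz to frequency lines.
    df = freqs[1] - freqs[0]
    distance = None if distance_hz is None else max(1, int(round(distance_hz / df)))
    # prominence=0.0 keeps every local maximum but still reports prominences.
    min_prom = 0.0 if prominence_db is None else prominence_db
    idx, props = find_peaks(s1_db, prominence=min_prom, distance=distance)
    prom = props["prominences"]
    if freq_range is not None:
        keep = (freqs[idx] >= freq_range[0]) & (freqs[idx] <= freq_range[1])
        idx, prom = idx[keep], prom[keep]
    if max_peaks is not None:
        # Keep the most prominent peaks, then restore frequency order.
        strongest = np.argsort(prom)[::-1][:max_peaks]
        idx = np.sort(idx[strongest])
    return freqs[idx], idx
```

Working in dB makes the prominence threshold roughly independent of the absolute PSD level, which is why a single value can be reused across records with different excitation strength.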

Source code in dspkit/fdd.py


dspkit.fdd.fdd_mode_shapes(U, peak_indices, normalize=True)

Extract mode shapes at the identified natural frequencies.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| `U` | `ndarray, shape (M, n_channels, n_channels), complex` | Left singular vectors from `fdd_svd`. | required |
| `peak_indices` | `array_like of int` | Indices of the natural frequencies (from `fdd_peak_picking`). | required |
| `normalize` | `bool` | If `True`, the returned mode shapes are normalized. | `True` |

Returns:

| Name | Type | Description |
|---|---|---|
| `modes` | `ndarray, shape (n_modes, n_channels), complex` | Mode shapes. |
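In FDD the mode shape at a peak is usually taken as the first left singular vector at that frequency line. The sketch below shows that indexing step. The helper name `mode_shapes_sketch` is hypothetical, and the normalization convention (scaling each shape so its largest-magnitude component equals 1) is an assumption; the convention used by `fdd_mode_shapes` is not stated on this page.

```python
import numpy as np


def mode_shapes_sketch(U, peak_indices, normalize=True):
    """Illustrative mode-shape extraction; not the dspkit implementation."""
    peak_indices = np.asarray(peak_indices, dtype=int)
    # First singular vector at each peak -> shape (n_modes, n_channels).
    modes = U[peak_indices, :, 0].copy()
    if normalize:
        # Assumed convention: divide by the largest-magnitude component,
        # which fixes both the scale and the arbitrary complex phase.
        k = np.argmax(np.abs(modes), axis=1)
        ref = modes[np.arange(modes.shape[0]), k]
        modes = modes / ref[:, None]
    return modes
```

Dividing by a complex reference component removes the arbitrary phase of each singular vector, so a nearly real mode comes out with nearly zero imaginary parts.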

Source code in dspkit/fdd.py

dspkit.fdd.efdd_damping(freqs, S, U, peak_indices, fs, mac_threshold=0.8, n_crossings=10)

Enhanced FDD damping estimation via inverse FFT of the SDOF bell.

For each identified mode:

1. Extract the singular value bell around the peak by checking the Modal Assurance Criterion (MAC) with the peak mode shape.
2. Inverse-FFT the bell to get the free-decay autocorrelation.
3. Count zero-crossings and fit the logarithmic decrement to estimate the damping ratio.
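The MAC-based bell selection in the first step can be sketched as below. The MAC between two complex vectors $a$ and $b$ is $|a^H b|^2 / ((a^H a)(b^H b))$. The helper names `mac` and `sdof_bell_mask` are hypothetical, and growing the bell as one contiguous band outward from the peak is an assumption of this example, not a documented detail of `efdd_damping`.

```python
import numpy as np


def mac(a, b):
    """Modal Assurance Criterion between two (possibly complex) vectors."""
    num = np.abs(np.vdot(a, b)) ** 2
    den = np.vdot(a, a).real * np.vdot(b, b).real
    return num / den


def sdof_bell_mask(U, peak_index, mac_threshold=0.8):
    """Boolean mask of frequency lines whose first singular vector matches the peak.

    Illustrative only: the bell grows left and right from the peak and stops
    at the first line whose MAC drops below the threshold.
    """
    n_lines = U.shape[0]
    ref = U[peak_index, :, 0]
    mask = np.zeros(n_lines, dtype=bool)
    mask[peak_index] = True
    k = peak_index - 1
    while k >= 0 and mac(ref, U[k, :, 0]) >= mac_threshold:
        mask[k] = True
        k -= 1
    k = peak_index + 1
    while k < n_lines and mac(ref, U[k, :, 0]) >= mac_threshold:
        mask[k] = True
        k += 1
    return mask
```

Because MAC is invariant to complex scaling, the arbitrary phase of each singular vector does not affect which lines fall inside the bell.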

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| `freqs` | `ndarray, shape (M,)` | Frequency vector [Hz]. | required |
| `S` | `ndarray, shape (M, n_channels)` | Singular values from `fdd_svd`. | required |
| `U` | `ndarray, shape (M, n_channels, n_channels), complex` | Left singular vectors from `fdd_svd`. | required |
| `peak_indices` | `array_like of int` | Indices of the natural frequencies. | required |
| `fs` | `float` | Sampling frequency [Hz]. | required |
| `mac_threshold` | `float` | MAC threshold for including frequency lines in the SDOF bell. | `0.8` |
| `n_crossings` | `int` | Number of zero-crossings to use for damping estimation. | `10` |

Returns:

| Name | Type | Description |
|---|---|---|
| `damping_ratios` | `ndarray, shape (n_modes,)` | Estimated damping ratios (fraction of critical). |
| `natural_freqs` | `ndarray, shape (n_modes,)` | Refined natural frequency estimates [Hz] from zero-crossing counting. |
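The inverse-FFT and logarithmic-decrement steps can be sketched as follows. Both helper names are hypothetical and the details (linear interpolation of the crossings, one extremum per half cycle, a least-squares line through the log amplitudes) are assumptions of this example rather than the `dspkit` implementation. The relations used are $\delta = 2\pi\zeta/\sqrt{1-\zeta^2}$, hence $\zeta = \delta/\sqrt{4\pi^2+\delta^2}$, and $f_n = f_d/\sqrt{1-\zeta^2}$.

```python
import numpy as np


def bell_to_decay(s1, mask):
    """Zero everything outside the SDOF bell and inverse-FFT the one-sided spectrum."""
    bell = np.where(mask, s1, 0.0)
    r = np.fft.irfft(bell)       # length 2 * (M - 1), lag spacing 1 / fs
    return r[: r.size // 2]      # keep the non-negative lags only


def damping_from_decay(r, fs, n_crossings=10):
    """Estimate (zeta, f_n) from a free-decay signal via zero-crossings."""
    r = np.asarray(r, dtype=float)
    # Sample index just before each sign change.
    i = np.nonzero(np.diff(np.signbit(r)))[0][:n_crossings]
    if i.size < 3:
        raise ValueError("need at least three zero-crossings")
    # Linear interpolation gives sub-sample crossing times [s].
    t = (i + r[i] / (r[i] - r[i + 1])) / fs
    # Consecutive crossings are half a damped period apart.
    f_d = (i.size - 1) / (2.0 * (t[-1] - t[0]))
    # One extremum per half cycle, taken between consecutive crossings.
    amps = np.array([np.max(np.abs(r[a + 1:b + 1]))
                     for a, b in zip(i[:-1], i[1:])])
    slope = np.polyfit(np.arange(amps.size), np.log(amps), 1)[0]
    delta = -2.0 * slope         # logarithmic decrement per full period
    zeta = delta / np.sqrt(4.0 * np.pi ** 2 + delta ** 2)
    f_n = f_d / np.sqrt(1.0 - zeta ** 2)
    return zeta, f_n
```

Fitting a line through several log amplitudes, instead of using a single pair of extrema, averages out sampling error in the individual peak heights.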

Source code in dspkit/fdd.py
